Are you getting the ‘error creating default bridge network’ error when you run Docker?

Docker is a containerization platform used to create and run containers.

At Bobcares, we often get requests on Docker errors, as a part of our Docker Hosting Support.

Today, we’ll see how our Support Engineers fix this Docker error for our customers.

Explore more about Docker

Docker is a tool designed to benefit both developers and system administrators. It is a containerization platform that packages an application and all its dependencies together in the form of a container.

Containers help modularize services and applications. Each one packages our code together with its dependencies, so it runs quickly and reliably across different platforms.

But we encounter many errors while working with Docker. Today, let’s see how our Engineers fix this particular error.

How we fix the Docker ‘error creating default bridge network’

Recently, one of our customers approached us with the error message ‘Error creating default "bridge" network: cannot create network’.

Our Support Engineers found that the customer was facing the issue due to a change in data structures in the network code. This occurred while he was upgrading the OS.

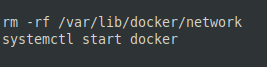

So, our Engineers deleted the network and started Docker.

This fixed the problem.

In another case, we went through the same process, but the error persisted. So we deleted all the sockets and interfaces.

rm -rf /var/lib/docker/network/*

mkdir /var/lib/docker/network/files

Then we started Docker using the command,

systemctl start docker

But the containers refused to work since their sockets were gone. So we deleted all the containers by running the command,

docker ps -aq | xargs -r -n 1 docker rm -f

And then we recreated them.

Finally, this fixed the error.

Note: Here, the containers were stateless, and we confirmed with the customer before deleting them.

Also, the fix may vary depending on the scenario.
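
The reset described above can be sketched as a small shell function. This is only a sketch under stated assumptions: the function name reset_docker_network_state is hypothetical, and it assumes the network state lives under /var/lib/docker/network (the default data root on most installs). It is destructive, so it takes the directory as a parameter and can be tried on a scratch copy first.

```shell
# Sketch only: clear Docker's local network state below a given directory.
# The default path is an assumption (default data root); pass another one to test.
reset_docker_network_state() {
    network_dir="${1:-/var/lib/docker/network}"
    if [ ! -d "$network_dir" ]; then
        echo "no such directory: $network_dir" >&2
        return 1
    fi
    # Remove the contents but keep the directory itself.
    # ${network_dir:?} aborts instead of expanding to "/*" if the variable is empty.
    rm -rf -- "${network_dir:?}"/*
    # Recreate the empty "files" subdirectory, as in the steps above.
    mkdir -p "$network_dir/files"
}

# Intended order on a real host (run as root; not executed by this sketch):
#   systemctl stop docker
#   reset_docker_network_state
#   systemctl start docker
```

Stopping the daemon before clearing the directory is an added precaution here, not a step the article itself lists.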


Conclusion

In short, when working with Docker we may encounter the ‘error creating default bridge network’ error. This mainly occurs due to a change in data structures in the network code. Also, we saw how our Support Engineers fix this for our customers.


I’m using Fedora release 33 (Thirty Three)

Docker version is 20.10.0, build 7287ab3

First I ran docker system prune, and since then the docker daemon has failed to start.

I ran systemctl start docker command and got

Job for docker.service failed because the control process exited with error code.

See "systemctl status docker.service" and "journalctl -xe" for details.

and then from systemctl status docker.service I got

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor pr>

Active: failed (Result: exit-code) since Wed 2020-12-09 11:10:58 IST; 15s >

TriggeredBy: ● docker.socket

Docs: https://docs.docker.com

Process: 10391 ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/contai>

Main PID: 10391 (code=exited, status=1/FAILURE)

Dec 09 11:10:58 barad-laptop systemd[1]: docker.service: Scheduled restart job,>

Dec 09 11:10:58 barad-laptop systemd[1]: Stopped Docker Application Container E>

Dec 09 11:10:58 barad-laptop systemd[1]: docker.service: Start request repeated>

Dec 09 11:10:58 barad-laptop systemd[1]: docker.service: Failed with result 'ex>

Dec 09 11:10:58 barad-laptop systemd[1]: Failed to start Docker Application Con>

Then I ran sudo dockerd --debug and got

failed to start daemon: Error initializing network controller: Error creating default "bridge" network: Failed to program NAT chain: ZONE_CONFLICT: 'docker0' already bound to a zone
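
A minimal sketch of how one might follow up on this message: it pulls the interface name out of the ZONE_CONFLICT text and prints the firewalld queries that show which zone currently holds it. The helper name zone_conflict_interface is hypothetical. The firewall-cmd commands are only printed, not run.

```shell
# Extract the interface name from a firewalld ZONE_CONFLICT message.
zone_conflict_interface() {
    printf '%s\n' "$1" |
        sed -n "s/.*ZONE_CONFLICT: '\([^']*\)' already bound to a zone.*/\1/p"
}

# Message copied from the dockerd --debug output above.
msg="failed to start daemon: Error initializing network controller: Error creating default \"bridge\" network: Failed to program NAT chain: ZONE_CONFLICT: 'docker0' already bound to a zone"

iface="$(zone_conflict_interface "$msg")"
echo "conflicting interface: $iface"

# Inspection commands to run as root on the affected host (printed only):
echo "firewall-cmd --get-zone-of-interface=$iface"
echo "firewall-cmd --get-active-zones"
```

If the interface turns out to be bound to an unexpected zone, removing it with firewall-cmd --permanent --zone=<zone> --remove-interface=docker0 and then running firewall-cmd --reload is one possible way to release the binding. That step is an assumption, not something this post confirms.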

Related to this Github issue

- This is a bug report

- This is a feature request

- I searched existing issues before opening this one

Expected behavior

sudo systemctl start docker should correctly start docker.

Actual behavior

It gives an error:

Job for docker.service failed because the control process exited with error code. See "systemctl status docker.service" and "journalctl -xe" for details.

journalctl -xe gives:

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.039853271Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -D OUTPUT -m addrtype --dst-type LOCAL -j DOCKER]"

May 19 08:45:17 my.server.tld firewalld[128]: WARNING: COMMAND_FAILED: '/usr/sbin/iptables -w10 -t nat -D OUTPUT -m addrtype --dst-type LOCAL -j DOCKER' failed: iptables: No chain/target/match by that name.

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.049280804Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -D PREROUTING]"

May 19 08:45:17 my.server.tld firewalld[128]: WARNING: COMMAND_FAILED: '/usr/sbin/iptables -w10 -t nat -D PREROUTING' failed: iptables: Bad rule (does a matching rule exist in that chain?).

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.058288942Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -D OUTPUT]"

May 19 08:45:17 my.server.tld firewalld[128]: WARNING: COMMAND_FAILED: '/usr/sbin/iptables -w10 -t nat -D OUTPUT' failed: iptables: Bad rule (does a matching rule exist in that chain?).

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.068605847Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -F DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.078732710Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -X DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.088858154Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -F DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.099074239Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -X DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.109451286Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -F DOCKER-ISOLATION-STAGE-1]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.119789596Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -X DOCKER-ISOLATION-STAGE-1]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.129741289Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -F DOCKER-ISOLATION-STAGE-2]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.139899575Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -X DOCKER-ISOLATION-STAGE-2]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.149545851Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -F DOCKER-ISOLATION]"

May 19 08:45:17 my.server.tld firewalld[128]: WARNING: COMMAND_FAILED: '/usr/sbin/iptables -w10 -t filter -F DOCKER-ISOLATION' failed: iptables: No chain/target/match by that name.

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.161702471Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -X DOCKER-ISOLATION]"

May 19 08:45:17 my.server.tld firewalld[128]: WARNING: COMMAND_FAILED: '/usr/sbin/iptables -w10 -t filter -X DOCKER-ISOLATION' failed: iptables: No chain/target/match by that name.

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.189553198Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -n -L DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.201206779Z" level=debug msg="Firewalld passthrough: ipv4, [-t nat -N DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.211888849Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -n -L DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.221932805Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -N DOCKER]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.231964707Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -n -L DOCKER-ISOLATION-STAGE-1]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.241278619Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -N DOCKER-ISOLATION-STAGE-1]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.250686355Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -n -L DOCKER-ISOLATION-STAGE-2]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.261410608Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -N DOCKER-ISOLATION-STAGE-2]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.274901372Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -C DOCKER-ISOLATION-STAGE-1 -j RETURN]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.293487689Z" level=debug msg="Firewalld passthrough: ipv4, [-A DOCKER-ISOLATION-STAGE-1 -j RETURN]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.317872917Z" level=debug msg="Firewalld passthrough: ipv4, [-t filter -C DOCKER-ISOLATION-STAGE-2 -j RETURN]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.337487620Z" level=debug msg="Firewalld passthrough: ipv4, [-A DOCKER-ISOLATION-STAGE-2 -j RETURN]"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.360083685Z" level=debug msg="Allocating IPv4 pools for network bridge (6d7ae465f646b2fd1d5ea39d36c9af111670c6e4f91c7b6a922c076050cf0a78)"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.360561939Z" level=debug msg="RequestPool(LocalDefault, 172.17.18.1/24, 172.17.18.0/25, map[], false)"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.361018354Z" level=debug msg="RequestAddress(LocalDefault/172.17.18.0/24/172.17.18.0/25, 172.17.18.1, map[RequestAddressType:com.docker.network.gateway])"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.361908437Z" level=debug msg="Request address PoolID:172.17.18.0/24 App: ipam/default/data, ID: LocalDefault/172.17.18.0/24, DBIndex: 0x0, Bits: 256, Unselected: 254, Sequence: (0x80000000, 1)->(0x0, 6)->(0x1, 1)->end Curr:0 Serial:false PrefAddress:172.17.18.1

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.362397190Z" level=debug msg="Did not find any interface with name docker0: Link not found"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.362935554Z" level=debug msg="Failed to create bridge docker0 via netlink. Trying ioctl"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.368678322Z" level=debug msg="releasing IPv4 pools from network bridge (6d7ae465f646b2fd1d5ea39d36c9af111670c6e4f91c7b6a922c076050cf0a78)"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.369138101Z" level=debug msg="ReleaseAddress(LocalDefault/172.17.18.0/24/172.17.18.0/25, 172.17.18.1)"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.369570818Z" level=debug msg="Released address PoolID:LocalDefault/172.17.18.0/24/172.17.18.0/25, Address:172.17.18.1 Sequence:App: ipam/default/data, ID: LocalDefault/172.17.18.0/24, DBIndex: 0x0, Bits: 256, Unselected: 253, Sequence: (0xc0000000, 1)->(0x0, 6)

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.370108243Z" level=debug msg="ReleasePool(LocalDefault/172.17.18.0/24/172.17.18.0/25)"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.370541074Z" level=debug msg="daemon configured with a 15 seconds minimum shutdown timeout"

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.370957476Z" level=debug msg="start clean shutdown of all containers with a 15 seconds timeout..."

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.371334882Z" level=debug msg="Cleaning up old mountid : start."

May 19 08:45:17 my.server.tld dockerd[10597]: time="2021-05-19T08:45:17.371525551Z" level=debug msg="Cleaning up old mountid : done."

May 19 08:45:17 my.server.tld dockerd[10597]: Error starting daemon: Error initializing network controller: Error creating default "bridge" network: permission denied

Output of docker version:

[root@srv ~]# docker version

Client:

Version: 18.09.1

API version: 1.39

Go version: go1.10.6

Git commit: 4c52b90

Built: Wed Jan 9 19:35:01 2019

OS/Arch: linux/amd64

Experimental: false

error during connect: Get http://%2Fvar%2Frun%2Fdocker.sock/v1.39/version: read unix @->/var/run/docker.sock: read: connection reset by peer

Output of docker info:

[root@srv ~]# docker info

Cannot connect to the Docker daemon at unix:///var/run/docker.sock. Is the docker daemon running?

Additional environment details (AWS, VirtualBox, physical, etc.)

I’m on a CentOS 7.5 VPS:

[root@srv ~]# uname -a

Linux my.server.tld 3.10.0-1160.21.1.vz7.174.13 #1 SMP Thu Apr 22 16:18:59 MSK 2021 x86_64 x86_64 x86_64 GNU/Linux

The only other things running are nginx with php, but I tried stopping those and no success.

I tried various solutions like deleting /var/lib/docker/network/files/local-kv.db or the entire network contents and no success. I tried a more recent version too.

I also tried the top upvoted solution here: #123

ip link add name docker0 type bridge

ip addr add dev docker0 172.17.0.1/16

With and without sudo, the output is:

[root@srv ~]# ip link add name docker0 type bridge

RTNETLINK answers: Permission denied

/etc/docker/daemon.json is (I also tried without this file at all):

{

"experimental": false, <- tried true as well

"bip": "172.17.18.1/24", <- tried others like 192.168.x.y as well

"fixed-cidr": "172.17.18.1/25",

"debug": true,

"ipv6": false, <- tried true as well

"fixed-cidr-v6": "fd00:dead:beef::/80"

}

Output of ip addr:

[root@srv ~]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: venet0: <BROADCAST,POINTOPOINT,NOARP,UP,LOWER_UP> mtu 1500 qdisc noqueue state UNKNOWN group default

link/void

inet 127.0.0.1/32 scope host venet0

valid_lft forever preferred_lft forever

inet MY_EXTERNAL_IP_REDACTED/32 brd MY_EXTERNAL_IP_REDACTED scope global venet0:0

valid_lft forever preferred_lft forever

I’m at my wits’ end here, anyone run into this before?
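
One thing the output above hints at: the kernel release contains vz7 and the only non-loopback interface is venet0, both of which are typical of an OpenVZ/Virtuozzo container rather than a full VM. In such a container, creating a bridge is usually something the host has to allow, which would be consistent with the "RTNETLINK answers: Permission denied" reply. Here is a rough sketch of that check. The function name is_vz_kernel is hypothetical, and the pattern is a heuristic, not an official detection method.

```shell
# Heuristic: succeed if the kernel release string looks like a Virtuozzo/OpenVZ kernel.
is_vz_kernel() {
    case "$1" in
        *.vz[0-9]*) return 0 ;;
        *) return 1 ;;
    esac
}

# Kernel release taken from the uname -a output in the post.
release="3.10.0-1160.21.1.vz7.174.13"

if is_vz_kernel "$release"; then
    echo "container-style kernel: bridge creation may need to be enabled by the host"
else
    echo "no OpenVZ/Virtuozzo marker in the kernel release"
fi

# Further checks one could run on the live system (not executed here):
#   systemd-detect-virt --container
#   test -e /proc/user_beancounters && echo "OpenVZ-style container"
```

If the check matches, asking the VPS provider whether bridge devices are permitted for the container is probably more productive than changing daemon.json.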

I have used Docker for years on my Raspberry Pi 3B rev 1.2 (with RaspiOS bullseye 11.5). Recently, I don’t know why, the dockerd daemon failed to start. The systemd unit remains in failed status. I can reproduce the error by running dockerd manually and get some logs, but they didn’t help me understand what’s going on:

➜ ~ sudo dockerd

INFO[2022-12-12T16:51:52.725265278+01:00] Starting up

INFO[2022-12-12T16:51:52.731645021+01:00] parsed scheme: "unix" module=grpc

INFO[2022-12-12T16:51:52.731805647+01:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2022-12-12T16:51:52.731938199+01:00] ccResolverWrapper: sending update to cc: {[{unix:///run/containerd/containerd.sock <nil> 0 <nil>}] <nil> <nil>} module=grpc

INFO[2022-12-12T16:51:52.732012886+01:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2022-12-12T16:51:52.738021067+01:00] parsed scheme: "unix" module=grpc

INFO[2022-12-12T16:51:52.738182057+01:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2022-12-12T16:51:52.738303723+01:00] ccResolverWrapper: sending update to cc: {[{unix:///run/containerd/containerd.sock <nil> 0 <nil>}] <nil> <nil>} module=grpc

INFO[2022-12-12T16:51:52.738366380+01:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2022-12-12T16:51:52.893715377+01:00] [graphdriver] using prior storage driver: overlay2

WARN[2022-12-12T16:51:52.906082155+01:00] Unable to find memory controller

INFO[2022-12-12T16:51:52.907810541+01:00] Loading containers: start.

INFO[2022-12-12T16:51:53.156768500+01:00] Default bridge (docker0) is assigned with an IP address 172.17.0.0/16. Daemon option --bip can be used to set a preferred IP address

INFO[2022-12-12T16:51:53.160111835+01:00] stopping event stream following graceful shutdown error="<nil>" module=libcontainerd namespace=moby

failed to start daemon: Error initializing network controller: Error creating default "bridge" network: failed to check bridge interface existence: no buffer space available

I think the main error is

failed to check bridge interface existence: no buffer space available

but I cannot figure out how to fix this

I tried multiple actions:

- Deleting the /var/lib/docker folder

- Deleting the docker0 virtual interface

- Uninstalling/reinstalling all packages (docker-ce, containerd, docker-rootless-extras, etc.)

- Rebooting multiple times (yeah, that seems strange, but multiple posts about similar errors on the internet mentioned that rebooting magically solved the issue)

Note: The IPV6 feature is currently disabled in /etc/docker/daemon.json. I also tried to enable it, without any success.

{

"ipv6": false,

"fixed-cidr-v6": "2a02:XXXX:YYYY:ZZZZ::/80"

}

(Note: I obfuscated my CIDR)

Any help is welcome!

Edit: I updated /boot/cmdline.txt to add following options:

cgroup_memory=1 cgroup_enable=memory

After a reboot, the warning related to the memory controller disappeared. The original error remains unfixed:

INFO[2022-12-13T13:21:15.441652580+01:00] Starting up

INFO[2022-12-13T13:21:15.447998677+01:00] parsed scheme: "unix" module=grpc

INFO[2022-12-13T13:21:15.448131021+01:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2022-12-13T13:21:15.448253886+01:00] ccResolverWrapper: sending update to cc: {[{unix:///run/containerd/containerd.sock <nil> 0 <nil>}] <nil> <nil>} module=grpc

INFO[2022-12-13T13:21:15.448313938+01:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2022-12-13T13:21:15.455202014+01:00] parsed scheme: "unix" module=grpc

INFO[2022-12-13T13:21:15.455321441+01:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2022-12-13T13:21:15.455415712+01:00] ccResolverWrapper: sending update to cc: {[{unix:///run/containerd/containerd.sock <nil> 0 <nil>}] <nil> <nil>} module=grpc

INFO[2022-12-13T13:21:15.455475035+01:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2022-12-13T13:21:15.574649053+01:00] [graphdriver] using prior storage driver: overlay2

INFO[2022-12-13T13:21:15.587653226+01:00] Loading containers: start.

INFO[2022-12-13T13:21:15.831901578+01:00] Default bridge (docker0) is assigned with an IP address 172.17.0.0/16. Daemon option --bip can be used to set a preferred IP address

INFO[2022-12-13T13:21:15.834550486+01:00] stopping event stream following graceful shutdown error="<nil>" module=libcontainerd namespace=moby

failed to start daemon: Error initializing network controller: Error creating default "bridge" network: failed to check bridge interface existence: no buffer space available
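
For reference, the /boot/cmdline.txt change mentioned in the edit above can be sketched as an idempotent helper. It is only a sketch: the function name add_cmdline_options is hypothetical, and it assumes the file holds the whole kernel command line on its first line, as is usual on Raspberry Pi OS. It addresses the memory controller warning only, not the bridge error.

```shell
# Append each given option to the single-line kernel command line if it is missing.
add_cmdline_options() {
    file="$1"
    shift
    line="$(head -n 1 "$file")"
    for opt in "$@"; do
        case " $line " in
            *" $opt "*) ;;                      # already present, leave as-is
            *) line="${line:+$line }$opt" ;;    # append, separated by one space
        esac
    done
    printf '%s\n' "$line" > "$file"
}

# Example on a real system (run as root, keep a backup; not executed here):
#   cp /boot/cmdline.txt /boot/cmdline.txt.bak
#   add_cmdline_options /boot/cmdline.txt cgroup_memory=1 cgroup_enable=memory
```

Running it twice leaves the file unchanged, which avoids duplicated options after repeated attempts.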

Hi,

I generally have found Arch Linux to be a very stable system, considering how bleeding-edge it is, but Docker (installed from the official Arch Linux community repo), specifically the Docker daemon has been giving me this error on startup (i.e., this is the output of systemctl status docker):

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled)

Active: failed (Result: exit-code) since Thu 2016-06-30 05:21:37 AEST; 1min 57s ago

Docs: https://docs.docker.com

Process: 923 ExecStart=/usr/bin/docker daemon -H fd:// (code=exited, status=1/FAILURE)

Main PID: 923 (code=exited, status=1/FAILURE)

Tasks: 0 (limit: 512)

Memory: 54.1M

CGroup: /system.slice/docker.service

Jun 30 05:21:33 fusion809-pc docker[923]: time="2016-06-30T05:21:33.675693871+10:00" level=warning msg="devmapper: Base device already exists and has filesystem xfs on it. User specified filesystem will be ignored."

Jun 30 05:21:33 fusion809-pc docker[923]: time="2016-06-30T05:21:33.704968079+10:00" level=info msg="[graphdriver] using prior storage driver "devicemapper""

Jun 30 05:21:34 fusion809-pc docker[923]: time="2016-06-30T05:21:34.595886267+10:00" level=info msg="Graph migration to content-addressability took 0.00 seconds"

Jun 30 05:21:34 fusion809-pc docker[923]: time="2016-06-30T05:21:34.802902289+10:00" level=info msg="Firewalld running: false"

Jun 30 05:21:35 fusion809-pc docker[923]: time="2016-06-30T05:21:35.291565827+10:00" level=info msg="Default bridge (docker0) is assigned with an IP address 172.17.0.0/16. Daemon option --bip can be used to set a preferred IP address"

Jun 30 05:21:37 fusion809-pc docker[923]: time="2016-06-30T05:21:37.600757783+10:00" level=fatal msg="Error starting daemon: Error initializing network controller: Error creating default "bridge" network: cannot create network f15440f7a6fb0f6045f006aee334c91e6e4317b6ae23c2a4e29ecc800ba5e34a (docker0): conflicts with network 44b54e0be7a2145be67a03fa2f38aadc75d92a7ca76f18a2a0a9a59cb12d8388 (docker0): networks have same bridge name"

Jun 30 05:21:37 fusion809-pc systemd[1]: docker.service: Main process exited, code=exited, status=1/FAILURE

Jun 30 05:21:37 fusion809-pc systemd[1]: Failed to start Docker Application Container Engine.

Jun 30 05:21:37 fusion809-pc systemd[1]: docker.service: Unit entered failed state.

Jun 30 05:21:37 fusion809-pc systemd[1]: docker.service: Failed with result 'exit-code'.

I have run a Google search for "network conflict docker" and the results I found (e.g., https://github.com/docker/machine/issues/1573, http://stackoverflow.com/questions/3125 … s-conflict, http://www.linuxjournal.com/content/con … etworking) seemed to apply to different situations where people were using docker-machine, boot2docker and non-Linux operating systems, namely Windows 7. I have looked at the documentation hoping to find something applicable to this but I can’t seem to find it. For example, I tried starting the Docker daemon with:

sudo docker daemon -b 172.17.0.1/16

in the hope that a slightly different IP would rid me of this conflict, but it gave:

INFO[0000] previous instance of containerd still alive (9647)

WARN[0000] devmapper: Usage of loopback devices is strongly discouraged for production use. Please use `--storage-opt dm.thinpooldev` or use `man docker` to refer to dm.thinpooldev section.

WARN[0000] devmapper: Base device already exists and has filesystem xfs on it. User specified filesystem will be ignored.

INFO[0000] [graphdriver] using prior storage driver "devicemapper"

INFO[0000] Graph migration to content-addressability took 0.00 seconds

INFO[0000] Firewalld running: false

FATA[0001] Error starting daemon: Error initializing network controller: Error creating default "bridge" network: bridge device with non default name 172.17.0.1/16 must be created manually

and I don’t know how to create a bridge manually. I have pastebined my journalctl -xe output if that helps, here is the link.
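
On the last point: -b expects the name of a bridge device, not an address, which is why the daemon treated 172.17.0.1/16 as a bridge name. For the address of the default bridge, the log above points at --bip instead. A rough sketch of creating a bridge by hand with iproute2 follows. The helper name print_bridge_setup, the bridge name br-docker and the subnet are hypothetical examples, and the commands are only printed so they can be reviewed before being run as root.

```shell
# Print the commands that would create a bridge and start the daemon on it.
print_bridge_setup() {
    bridge="$1"   # name of the bridge device to create
    cidr="$2"     # address and prefix to assign to the bridge
    echo "ip link add name $bridge type bridge"
    echo "ip addr add $cidr dev $bridge"
    echo "ip link set dev $bridge up"
    echo "docker daemon -b $bridge"
}

# Example values (assumptions, pick a subnet that is unused on the host).
print_bridge_setup br-docker 172.18.0.1/16
```

This only covers the "must be created manually" message. It does not by itself resolve the original "networks have same bridge name" conflict.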

EDIT: I have tried re-installing Docker, and I have also tried re-installing Docker and its related packages (like runc and containerd). Each time I re-install Docker I have also ran

as root. My system is updated hourly using

, if you’re wondering whether it’s an outdated system issue.

Thanks for your time,

Brenton

Last edited by fusion809 (2016-06-29 20:34:16)

I tried to install Docker on my RPi running Arch Linux and got the following error message when trying to start a new container.

FATA[0000] Error starting daemon: Error initializing network controller: Error creating default "bridge" network: package not installed

How can I run the daemon?

fixer1234


asked Jul 30, 2015 at 17:59


Try rebooting. I read it’s necessary after installing bridge-utils. I had the same problem and rebooting did the trick for me.

answered Oct 30, 2015 at 2:04
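
One plausible reason a reboot helps, stated here as an assumption and not something the answer confirms: if the kernel package was upgraded since the last boot, the modules for the running kernel may no longer be on disk, so the bridge module cannot be loaded. A minimal sketch of that check follows. The function name modules_available is hypothetical.

```shell
# Succeed if a module directory exists for the given kernel release.
modules_available() {
    release="$1"
    root="${2:-/lib/modules}"   # second argument allows testing against another tree
    [ -d "$root/$release" ]
}

if modules_available "$(uname -r)"; then
    echo "modules for the running kernel are present; try: modprobe bridge"
else
    echo "no modules found for the running kernel; rebooting into the installed kernel is likely needed"
fi
```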

Bug 557182 - app-emulation/docker failed with ‘Error creating default "bridge" network’
