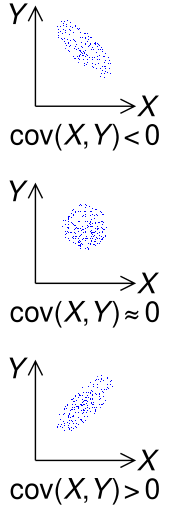

Sample points from a bivariate Gaussian distribution with a standard deviation of 3 in roughly the lower left–upper right direction and of 1 in the orthogonal direction. Because the x and y components co-vary, the variances of

In probability theory and statistics, a covariance matrix (also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix) is a square matrix giving the covariance between each pair of elements of a given random vector. Any covariance matrix is symmetric and positive semi-definite and its main diagonal contains variances (i.e., the covariance of each element with itself).

Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the

The covariance matrix of a random vector

Definition[edit]

Throughout this article, boldfaced unsubscripted

If the entries in the column vector

are random variables, each with finite variance and expected value, then the covariance matrix

where the operator

Conflicting nomenclatures and notations[edit]

Nomenclatures differ. Some statisticians, following the probabilist William Feller in his two-volume book An Introduction to Probability Theory and Its Applications,[2] call the matrix

Both forms are quite standard, and there is no ambiguity between them. The matrix

By comparison, the notation for the cross-covariance matrix between two vectors is

Properties[edit]

Relation to the autocorrelation matrix[edit]

The auto-covariance matrix

where the autocorrelation matrix is defined as ![{displaystyle operatorname {R} _{mathbf {X} mathbf {X} }=operatorname {E} [mathbf {X} mathbf {X} ^{rm {T}}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/375369663d22bba80d770f6374289f95dd22cf63)

Relation to the correlation matrix[edit]

An entity closely related to the covariance matrix is the matrix of Pearson product-moment correlation coefficients between each of the random variables in the random vector

where

Equivalently, the correlation matrix can be seen as the covariance matrix of the standardized random variables

Each element on the principal diagonal of a correlation matrix is the correlation of a random variable with itself, which always equals 1. Each off-diagonal element is between −1 and +1 inclusive.

Inverse of the covariance matrix[edit]

The inverse of this matrix,

Just as the covariance matrix can be written as the rescaling of a correlation matrix by the marginal variances:

So, using the idea of partial correlation, and partial variance, the inverse covariance matrix can be expressed analogously:

This duality motivates a number of other dualities between marginalizing and conditioning for gaussian random variables.

Basic properties[edit]

For ![{displaystyle operatorname {K} _{mathbf {X} mathbf {X} }=operatorname {var} (mathbf {X} )=operatorname {E} left[left(mathbf {X} -operatorname {E} [mathbf {X} ]right)left(mathbf {X} -operatorname {E} [mathbf {X} ]right)^{rm {T}}right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/bed55fb51d1aad5b83b37076bdbd9ad0177a813b)

![{displaystyle mathbf {mu _{X}} =operatorname {E} [{textbf {X}}]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/25987aa171cbd6ec023a92eb06b6ee750de39309)

is positive-semidefinite, i.e.

is symmetric, i.e.

- For any constant (i.e. non-random)

matrix

and constant

vector

, one has

- If

is another random vector with the same dimension as

, then

where

is the cross-covariance matrix of

and

.

Block matrices[edit]

The joint mean

where

If

then the conditional distribution for

[5]

defined by conditional mean

and conditional variance

The matrix

The matrix of regression coefficients may often be given in transpose form,

Partial covariance matrix[edit]

A covariance matrix with all non-zero elements tells us that all the individual random variables are interrelated. This means that the variables are not only directly correlated, but also correlated via other variables indirectly. Often such indirect, common-mode correlations are trivial and uninteresting. They can be suppressed by calculating the partial covariance matrix, that is the part of covariance matrix that shows only the interesting part of correlations.

If two vectors of random variables

The partial covariance matrix

Covariance matrix as a parameter of a distribution[edit]

If a column vector

where ![{displaystyle mathbf {mu =operatorname {E} [X]} }](https://wikimedia.org/api/rest_v1/media/math/render/svg/d9c821aa85a1cbc2edfffb9343c51c7cd3541b34)

Covariance matrix as a linear operator[edit]

Applied to one vector, the covariance matrix maps a linear combination c of the random variables X onto a vector of covariances with those variables:

Similarly, the (pseudo-)inverse covariance matrix provides an inner product

Which matrices are covariance matrices?[edit]

From the identity just above, let

which must always be nonnegative, since it is the variance of a real-valued random variable, so a covariance matrix is always a positive-semidefinite matrix.

The above argument can be expanded as follows:

where the last inequality follows from the observation that ![{displaystyle w^{rm {T}}(mathbf {X} -operatorname {E} [mathbf {X} ])}](https://wikimedia.org/api/rest_v1/media/math/render/svg/127bb7293f5d9738d76b0c9461d4236befd8dccb)

Conversely, every symmetric positive semi-definite matrix is a covariance matrix. To see this, suppose

Complex random vectors[edit]

The variance of a complex scalar-valued random variable with expected value

where the complex conjugate of a complex number

If

,

The matrix so obtained will be Hermitian positive-semidefinite,[8] with real numbers in the main diagonal and complex numbers off-diagonal.

- Properties

- The covariance matrix is a Hermitian matrix, i.e.

.[1]: p. 179

- The diagonal elements of the covariance matrix are real.[1]: p. 179

Pseudo-covariance matrix[edit]

For complex random vectors, another kind of second central moment, the pseudo-covariance matrix (also called relation matrix) is defined as follows:

In contrast to the covariance matrix defined above, Hermitian transposition gets replaced by transposition in the definition.

Its diagonal elements may be complex valued; it is a complex symmetric matrix.

Estimation[edit]

If

or, if the row means were known a priori,

These empirical sample covariance matrices are the most straightforward and most often used estimators for the covariance matrices, but other estimators also exist, including regularised or shrinkage estimators, which may have better properties.

Applications[edit]

The covariance matrix is a useful tool in many different areas. From it a transformation matrix can be derived, called a whitening transformation, that allows one to completely decorrelate the data[citation needed] or, from a different point of view, to find an optimal basis for representing the data in a compact way[citation needed] (see Rayleigh quotient for a formal proof and additional properties of covariance matrices).

This is called principal component analysis (PCA) and the Karhunen–Loève transform (KL-transform).

The covariance matrix plays a key role in financial economics, especially in portfolio theory and its mutual fund separation theorem and in the capital asset pricing model. The matrix of covariances among various assets’ returns is used to determine, under certain assumptions, the relative amounts of different assets that investors should (in a normative analysis) or are predicted to (in a positive analysis) choose to hold in a context of diversification.

Use in optimization[edit]

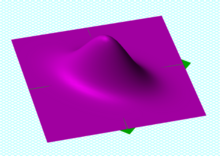

The evolution strategy, a particular family of Randomized Search Heuristics, fundamentally relies on a covariance matrix in its mechanism. The characteristic mutation operator draws the update step from a multivariate normal distribution using an evolving covariance matrix. There is a formal proof that the evolution strategy’s covariance matrix adapts to the inverse of the Hessian matrix of the search landscape, up to a scalar factor and small random fluctuations (proven for a single-parent strategy and a static model, as the population size increases, relying on the quadratic approximation).[9]

Intuitively, this result is supported by the rationale that the optimal covariance distribution can offer mutation steps whose equidensity probability contours match the level sets of the landscape, and so they maximize the progress rate.

Covariance mapping[edit]

In covariance mapping the values of the

In practice the column vectors

where

and the covariance matrix is estimated by the sample covariance matrix

where the angular brackets denote sample averaging as before except that the Bessel’s correction should be made to avoid bias. Using this estimation the partial covariance matrix can be calculated as

where the backslash denotes the left matrix division operator, which bypasses the requirement to invert a matrix and is available in some computational packages such as Matlab.[10]

Figure 1: Construction of a partial covariance map of N2 molecules undergoing Coulomb explosion induced by a free-electron laser.[11] Panels a and b map the two terms of the covariance matrix, which is shown in panel c. Panel d maps common-mode correlations via intensity fluctuations of the laser. Panel e maps the partial covariance matrix that is corrected for the intensity fluctuations. Panel f shows that 10% overcorrection improves the map and makes ion-ion correlations clearly visible. Owing to momentum conservation these correlations appear as lines approximately perpendicular to the autocorrelation line (and to the periodic modulations which are caused by detector ringing).

Fig. 1 illustrates how a partial covariance map is constructed on an example of an experiment performed at the FLASH free-electron laser in Hamburg.[11] The random function

In the example of Fig. 1 spectra

Two-dimensional infrared spectroscopy[edit]

Two-dimensional infrared spectroscopy employs correlation analysis to obtain 2D spectra of the condensed phase. There are two versions of this analysis: synchronous and asynchronous. Mathematically, the former is expressed in terms of the sample covariance matrix and the technique is equivalent to covariance mapping.[12]

See also[edit]

- Covariance function

- Multivariate statistics

- Lewandowski-Kurowicka-Joe distribution

- Gramian matrix

- Eigenvalue decomposition

- Quadratic form (statistics)

- Principal components

References[edit]

- ^ a b c Park,Kun Il (2018). Fundamentals of Probability and Stochastic Processes with Applications to Communications. Springer. ISBN 978-3-319-68074-3.

- ^ William Feller (1971). An introduction to probability theory and its applications. Wiley. ISBN 978-0-471-25709-7. Retrieved 10 August 2012.

- ^ Wasserman, Larry (2004). All of Statistics: A Concise Course in Statistical Inference. ISBN 0-387-40272-1.

- ^ Taboga, Marco (2010). «Lectures on probability theory and mathematical statistics».

- ^ Eaton, Morris L. (1983). Multivariate Statistics: a Vector Space Approach. John Wiley and Sons. pp. 116–117. ISBN 0-471-02776-6.

- ^ a b W J Krzanowski «Principles of Multivariate Analysis» (Oxford University Press, New York, 1988), Chap. 14.4; K V Mardia, J T Kent and J M Bibby «Multivariate Analysis (Academic Press, London, 1997), Chap. 6.5.3; T W Anderson «An Introduction to Multivariate Statistical Analysis» (Wiley, New York, 2003), 3rd ed., Chaps. 2.5.1 and 4.3.1.

- ^ Lapidoth, Amos (2009). A Foundation in Digital Communication. Cambridge University Press. ISBN 978-0-521-19395-5.

- ^ Brookes, Mike. «The Matrix Reference Manual».

- ^ Shir, O.M.; A. Yehudayoff (2020). «On the covariance-Hessian relation in evolution strategies». Theoretical Computer Science. Elsevier. 801: 157–174. doi:10.1016/j.tcs.2019.09.002.

- ^ L J Frasinski «Covariance mapping techniques» J. Phys. B: At. Mol. Opt. Phys. 49 152004 (2016), open access

- ^ a b O Kornilov, M Eckstein, M Rosenblatt, C P Schulz, K Motomura, A Rouzée, J Klei, L Foucar, M Siano, A Lübcke, F. Schapper, P Johnsson, D M P Holland, T Schlatholter, T Marchenko, S Düsterer, K Ueda, M J J Vrakking and L J Frasinski «Coulomb explosion of diatomic molecules in intense XUV fields mapped by partial covariance» J. Phys. B: At. Mol. Opt. Phys. 46 164028 (2013), open access

- ^ I Noda «Generalized two-dimensional correlation method applicable to infrared, Raman, and other types of spectroscopy» Appl. Spectrosc. 47 1329–36 (1993)

Further reading[edit]

- «Covariance matrix», Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- «Covariance Matrix Explained With Pictures», an easy way to visualize covariance matrices!

- Weisstein, Eric W. «Covariance Matrix». MathWorld.

- van Kampen, N. G. (1981). Stochastic processes in physics and chemistry. New York: North-Holland. ISBN 0-444-86200-5.

| About |

| Home |

| RO method |

| News Archive |

| Contact |

| Search |

| Documentation |

| Product Documents |

| Publications |

| ROM SAF Reports |

| Visiting Scientist |

| User Workshops |

| Data & Software |

| Product Archive |

| Product Quality |

| Browse Occultations |

| NRT Monitoring |

| Climate Monitoring |

| Software |

| User Service |

| Helpdesk |

| Helpdesk History |

| RSS Feeds |

| Registration |

| Login |

Error Covariance Matrices

The error covariance matrix (ECM) is a dataset that specifies the correlations in the observation errors between all possible pairs of vertical levels. It is given as a two-dimensional array, of size NxN , where N is the number of vertical levels in the sounding data products.

Specifically, the ECM for variable X

is given by E[(X

— E[X])(X — E[X])T],

where in practice the expectation value is estimated by an average

over a representative sample. It is a matrix because levels

are treated independently: X

is a vector of length equal to the number of vertical levels.

X could be an observation (refractivity N or bending angle α), in which case the ECM is sometimes called O,

or a

background state ({T, q, p*} for ECMWF-like models; {p, q} for Met

Office-like models), in which case the ECM is sometimes called B. Both need to be specified in the minimisation of the usual 1dvar cost function

J(x) = ½(x-b)TB-1(x-b) + ½(H(x)-y)TO-1(H(x)-y))

which is needed to produce a retrieved analysis x.

ECMs are positive semi-definite matrices which can be factorised as

ECM = DT C D

where the diagonal matrix D comprises the standard deviations of X, and defines the «size» of the variance, while the correlation matrix C describes the «shape» of the variance of X. By definition of standard deviation, the diagonal terms of C are all equal to 1.

The ROPP user has a choice of whether to use fixed standard deviations D,

read from an auxiliary covariance file, or to allow them to vary

profile-by-profile, by including them in the observation or background

file. At present, correlations C can only be read from the same auxiliary file. It is the content of these auxiliary covariance files which is described here.

In ROPP, the correlations in the covariance files are stored in packed,

«triangular» format, so that successive elements of the variable «corr»

hold the following matrix elements:

| 1 | 2 | 4 | 7 | … |

| 3 | 5 | 8 | … | |

| 6 | 9 | … | ||

| 10 | … | |||

| … |

By exploiting the symmetry of the correlation coefficients, only ½N(N+1) of them need to be stored.

The correlation matrices can be binned (in the same file) according to

latitude. In principle this could be extended to seasonality. Standard

deviations can also be held in the same file. These, rather than the

profile-by-profile SDs, will be used in the retrieval if

bg/obs_covar_method = FSFC or FSDC.

Further details on all the above can be found in the ROPP FM and 1DVAR User Guide.

Below, there are error-covariance matrices for the following:

- Covariance matrices for NRT products

- Covariance matrices for Reprocessed climate data records

- Other covariance matrices (included in ROPP)

Each matrix can be provided in several versions reflecting variations with geographical areas (e.g. latitude) and with season.

Covariance matrices for NRT products

| Description | Image (click to expand) |

File |

| Background

Suitable for 137L ECMWF background state. Currently, the T and p* standard deviations are the ones The block diagonal structure of correlation matrix arises |

|

ropp_bg_ecmwf_error_corr_L60.nc

ropp_bg_ses_ecmwf_error_corr_L137.nc |

| ObservationsSuitable for 247L refractivity retrievals. (Bending angle correlation matrices are usually assumed to be diagonal.) Note that there is a minimum σ(N) of 0.02 N-units above |

|

ropp_ob_refrac_error_corr_247L.nc |

Covariance matrices for Reprocessed climate data records

| Description | Image (click to expand) |

File |

| Background

Suitable for 60 L ERA-I background profiles. Average of |

|

ropp_bg_ecmwf_error_corr_L60.nc

bg_ef_ei_error_corr_sigma_PsBias_LatB5d_L60.nc |

Other covariance matrices (included in ROPP)

Some example ECMs are available in the ropp_1dvar/errors directory of the ROPP distribution, for the benefit of the users. They are described below.

| Description | Image (click to expand) |

File |

| Background Suitable for (early) 60L ECMWF background state {T, q, p*}. Note that correlation matrix assumes level 1 at the top of the atmosphere. |

|

ropp_bg_ecmwf_error_corr_L60.nc |

| Background Suitable for (current) 91L ECMWF background state {T, q, p*}. Note that correlation matrix assumes level 1 at the bottom of the atmosphere. |

|

ropp_bg_ecmwf_error_corr_L91.nc |

| BackgroundSuitable for (early) 50L Met Office background state {p, q}. Three latitude bands: -90 to -20, -20 to 20, 20 to 90N. |

|

ropp_bg_meto_error_corr_L50.nc |

| BackgroundSuitable for (current) 70L Met Office background state {p, q}. Three latitude bands: -90 to -20, -20 to 20, 20 to 90N. |

|

ropp_bg_meto_error_corr_L70.nc |

| Observation

Suitable for 300L bending angles. (Note that the correlation matrix C is the identity matrix). |

|

ropp_ob_bangle_error_corr.nc |

| Observation

Suitable for standard 247L refractivities. |

|

ropp_ob_refrac_error_corr.nc |

|

EUMETSAT ROM SAF licence

|

|

|

|

|

Copyright © 1999-2023 EUMETSAT

|

Battery state-of-power evaluation methods

Shunli Wang, … Zonghai Chen, in Battery System Modeling, 2021

7.4.4 Main charge-discharge condition test

The initial value of the error covariance matrix P0 can be determined from the X0 error of the initial state. In the application, the initial value should be as small as possible to speed up the tracking speed of the algorithm. Two important parameters are processed noise variance matrix Q and observation noise variance matrix R. As can be known from theoretical formula derivation, the dispose to Q and R plays a key role in improving the estimation effect by using this algorithm. Because it affects the size of the Kalman gain matrix K directly, the value of error covariance matrix P can be described as shown in Eq. (7.61):

(7.61)Rk=Eυk2=συ2

It is the expression of the observation noise variance, which is mainly derived from the distribution of observation error of experimental instruments and sensors, as shown in Eq. (7.62):

(7.62)Qk=Eω1k2Eω1kω2kEω2kω1kEω2k2=σω1,12σω1,22σω2,12σω2,22

It is the relationship between the system noise variance and the processing noise covariance. The two states in the system are generally unrelated and the covariance value is zero. Therefore, the diagonal variance can have a small value. The variance Q of processing noise is mainly derived from the error of the established equivalent model, which is difficult to be obtained by theoretical methods or means. A reasonable value range can be obtained through continuous debugging through simulation, and it is usually a small amount.

To verify the applicable range and stability of the algorithm, the input of different working conditions is used to observe the estimation accuracy of the algorithm. In this simulation, two working conditions are used: the constant-current working condition and the Beijing bus dynamic street test working condition. For the constant-current condition, only a constant value needs to be set in the program. To increase the complexity of the condition, two shelved stages are added to the constant-current input list to verify the tracking effect of Ah and the extended Kalman filtering. The following code is generated for the current.

The experimental data should be used to simulate the algorithm under its operating conditions. The current input module is realized in the environment under Beijing bus dynamic street test operating conditions. It is the time integration module, whose output is the capacity change of the working condition, and the lower part is the working condition data output module. It outputs the current into the workspace at the minimum time interval of the sampling measurement. The data onto the workspace are a time-series type and the current data need to be extracted. The data extraction and transformation can be performed in the main program, wherein the working condition is the Simulink module. The current is the time-series data, including the current output. S_Est is the algorithm program module, whose input is the initial S_Est_init and current data onto the estimated state value.

The current data are input into the program module of the extended Kalman filtering algorithm based on the model, and the initial values of other parameters have been determined in the program. The program finally outputs the ampere hour time integral and the state estimation curve of the extended Kalman filtering through the minimum time interval of 10 times as the sampling time. The current curve of the experimental test can be obtained as shown in Fig. 7.13.

Fig. 7.13. Pulse current operating current curve for the experimental test. (A) Main charge working condition. (B) Main discharge experimental test.

The unscented Kalman filtering algorithm is programmed by writing scripts to realize algorithm simulation to compare the output effect. The specific implementation process conforms to the processing requirements, and the main simulation program can be constructed. The program takes 10 times the minimum time interval as the sampling time and simulates the three methods of the state estimation, including ampere hour time integration, extended Kalman filtering, and unscented Kalman filtering, to compare the following situation of the three methods of the state over time. The program simultaneously outputs the state variation curve and estimation error curve with time, so that the tracking effect and error variation on the three methods can be obtained visually. Its implementation process is consistent with script. Different modules are used to replace the code block. The integration advantage of the environment is used in the graphical interface to obtain the same stimulation effect.

The operation logic is ensured by the unchanged intuitive presentation of the calculation process. Based on script implementation, the modular simulation is built accordingly. The experiment data are used as the input current, and the state prediction is obtained by the state prediction module. The forecast and model parameters are calculated. After that, the equivalent model can be used to predict the completion status value of Up as well as the output voltage. The input of the algorithm includes the update module, output state correction, and error covariance matrix update as the basis of the forecast. It takes the ampere hour integral result as the minimum time interval of the current data, which is taken as the reference value of the measured state.

The estimation effect of ampere hour and extended Kalman filtering is compared when the minimum time interval can be set as 10 times as the sampling period. The stability of the extended Kalman filtering algorithm is evaluated under working conditions. The state variable needs to accept the last estimated value as the basis of the next state prediction, in which the predicted value Up_pre is directly input into the corresponding position of the resistance-capacitance circuit modeling to conduct the terminal voltage prediction of the current polarization voltage. The functions of each part are realized in a more modular way for the complete structure and clear hierarchy.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780323904728000044

Dynamic Model Development

P.W. Oxby, … T.A. Duever, in Computer Aided Chemical Engineering, 2003

3.2 An Optimality Property of the Determinant Criterion

Equation (12) can be generalized by replacing the inverse of the estimate of the error covariance matrix,

Σ^εn−1, with an arbitrary symmetric positive definite weight matrix, W:

(14)Δθ^(k)=(XTWX)−1XTWz(θ^(k))

The estimate of the parameter covariance is:

(15)Σ^θ=(XTWX)−1XTWΣ^εn(XTWX)−1

If the determinant of the estimate of the parameter covariance matrix,

|Σ^θ|, is minimized with respect to the elements of W, the solution is independent of X and is simply

(16)W=Σ^εn−1

Substitution of (16) back into (14) gives (12) which, as has been shown, is equivalent to the determinant criterion. Therefore of all weighting schemes in the form of (14), it is the one equivalent to the determinant criterion that minimizes

|Σ^θ|, the determinant of the estimate of the parameter covariance matrix when the error covariance is unknown. This appears to be a rather strong result in support of the determinant criterion. It might also be noted that, unlike derivations based on likelihood, this result does not depend on the measurement errors being normally distributed. But it will be shown that the practical usefulness of the result does depend on the assumption that the residual covariance

Z(θ^)Z(θ^)T/n, is a good estimate of the true error covariance. Ideally one would want W to be the inverse of the error covariance matrix. The determinant in effect makes a compromise and substitutes an estimate of it. The potential problem here is that if the data set is not large, the residual covariance matrix may be a poor estimate of the error covariance matrix. A poor estimate of the error covariance matrix will lead to a poor estimate of the parameter covariance matrix. Therefore, although the determinant criterion gives the minimum determinant of the estimate of the parameter covariance matrix, if this estimate is poor, then the optimality property may be of little significance. This suggests that the optimality of the determinant criterion may be more relevant for large data sets than small ones. The simulation studies presented in Section 4 will confirm this to be true.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/S1570794603800706

Kalman Filters and Nonlinear Filters

Vytas B. Gylys, in Encyclopedia of Physical Science and Technology (Third Edition), 2003

IV.B Square Root Filtering

By far the greatest trouble spot in computer mechanization of the KF is the updating of state error covariance matrix P, that is, the computation of Pk∣k according to Eq. (18). As the estimation process progresses, the elements of Pk∣k typically continue to decrease in magnitude and so matrix Pk∣k keeps approaching the zero matrix, although theoretically it should forever remain positive definite, no matter how small in magnitude its elements become. Hence, unless special measures are taken, accumulation of roundoff error in the repetitive use of Eq. (18) may cause the computed Pk∣k to lose its positive definiteness. As suggested by the matrix inversion operation appearing on the right-hand side of Eq. (16) for computing the Kalman gain, this situation is aggravated if several components of the measurement vector are very accurate and consequently the positive definite measurement error covariance matrix R is ill conditioned, that is, if R has eigenvalues of both relatively very large and small magnitudes.

Let A be a nonnegative definite symmetric matrix; then there exists a matrix S such that A = SST. Matrix S is often called the square root of A. The Cholesky decomposition algorithm provides a method of constructing from A the matrix S so that S is lower triangular; that is, all elements of S above the main diagonal are zero. Square root filtering is motivated by the observations that, if the state error covariance matrix P = SST, then (a) since SST is always nonnegative definite, matrix P expressed as SST cannot become negative definite, and (b) matrix S is generally less ill conditioned than matrix P.

Several versions of the square root filtering algorithm are known. The earliest form was developed by J. E. Potter in 1964 for applications in which the process noise is absent (i.e., covariance matrix Q is zero) and the measurements are sequentially processed as scalars. In 1967 J. F. Bellantoni and K. W. Dodge extended Potter’s results to vector-valued measurements. A. Andrews in 1968 and then S. F. Schmidt in 1970 published two alternative procedures for handling the process noise. In 1973 N. A. Carlson described a procedure that considerably improved the speed and decreased the memory requirements of square root filtering and in which, as in Potter’s algorithm, vector-valued measurements are processed sequentially as scalars. Finally, the so-called UDUT covariance factorization method is the most recent major milestone in numerical handling of KFs. This method, developed by G. J. Bierman and C. L. Thornton, represents the state error covariances before and after the measurement update step asPk|k−1=Uk|k−1Dk|k−1Uk|k−1TandPk|k=Uk|kDk|kUk|kT,with D being a diagonal matrix and U an upper triangular matrix with 1’s on its main diagonal. In this method, the square root of the covariance matrix, which now would correspond to UD1/2, is never computed explicitly, which avoids numerical computation of square roots. Like Carlson’s algorithm, the UDUT factorization method maintains the covariance matrix in factored form and so (like Carlson’s algorithm) is considerably more efficient in processor time and storage than the original Potter algorithm.

As a quick comparison of computational efficiency, the conventional Kalman method, the less efficient form of Carlson’s algorithm, and the UDUT factorization method are roughly equal: The processing of each time step (consisting of one time propagation and one measurement update) requires of the order of 16[9ns3 + 9ns2nm + 3ns2nw] adds and about the same number of multiplies, plus a relatively modest number of divides and square roots (square roots are required only in some, as in Potter’s or Carlson’s square root algorithms). Here, as before, ns is the length of the state vector, nm the length of the measurement vector, and nw the lenght of the process noise vector w. The faster version of Carlson’s algorithm is more efficient and requires only of the order of 16[5ns3 + 9ns2nm + 3ns2nw] adds and 16[5ns3 + 12ns2nm + 3ns2nw] multiplies, plus 2nsnm divides and nsnm square roots, at each time point. The stable (Joseph) form of the KF [as given by Eq. (18′)] fares more poorly: At each time step, it requires of the order of 16[18ns3 + 15ns2nm + 3ns2nw] adds and about the same number of multiplies.

As a summary, (a) a square root filter is a numerically stable form for performing the KF covariance–gain processing defined by Eqs. (15), (16), and (18); (b) the efficiency of its more recent versions roughly compares with that of these three equations; (c) the increased stability allows one to use relatively low-precision arithmetic in the KF gain–covariance processing, with a possible exception of some dot products.

Real-time implementation of a filter involves additional issues that are unimportant in the non-real-time environment. Besides the adequacy of functional performance, the most important of these issues is the requirement to produce timely responses to external stimuli. Thus, resorting to a parallel or concurrent processing may be the only way out. This usually implies the use of special hardware architectures such as parallel, vector pipelined, or systolic processors.

As one example, consider the use of a filter in the tracking of multiple objects in a hard real-time environment characterized by strict deadlines. In such a case one may want to maintain simultaneously many estimation processes, each handling a single object. Parallel processors may seem to be a suitable hardware architecture for this problem, but if separate estimation processes in such an application progress at different rates and at any time some of them require a great amount of special handling, then parallel architecture, such as a single-instruction multiple-data stream computer, may not be the best choice. As another example, consider a KF to be implemented as part of a navigation system on a small airborne computer (uniprocessor). Suppose that the navigation measurements come at a certain fixed rate. If the filtering process cannot keep up with the arrival rate of measurements and so not all of them can be utilized, the estimation performance may deteriorate. In this problem, if there is an upper bound on hardware resources, the only solution may be to decompose the estimation algorithm into concurrently executable processes. For instance, the time-propagation step (which, say, is to be executed at a relatively high rate) may constitute one process and the measurement-update step (which needs to be executed only at some lower rate, say, at the rate of measurement arrivals) may constitute another. Such a decomposition of an estimation algorithm into concurrent procedures often creates a surrogate algorithm that performs more poorly than the original algorithm.

The effects of the finite-length word computing is another issue that must be considered in filter implementation for real-time applications. The computer on which a filter is developed and validated through successive off-line simulations is often more powerful and uses higher-precision arithmetic and number representations than the ultimate real-time processor. Hence, one must in advance determine in advance what effect a shorter word length will have on performance.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B0122274105003574

Computer Techniques and Algorithms in Digital Signal Processing

K. Giridhar, … Ronald A. Iltis, in Control and Dynamic Systems, 1996

A Appendix – Time Updates for the Blind MAPSD Algorithm

In this Appendix, we derive the one-step time updates of the channel estimate and the associated error covariance matrix for the blind MAP symbol detector developed in Section 4.2. Recall from Eq. (51) that

(A.1)pfk+1|dik+1,Nf,rk=∑j:djk,Nf∈dik+1,Nfαjp(fk+1|djk,Nf,rk)

where αj is given by Eq. (52). Using Eq. (49), this Gaussian sum can be explicitly written as follows:

(A.2)pfk+1|dik+1,Nf,rk=∑j:djk,Nf∈dik+1,NfαjNfk+1;Ff^jk|k,FPjk|kFH+Q

which defines the one-step prediction of the mean vector f^ik+1|k and the covariance matrix Pik+1|k. Some basic results about sums of Gaussian p.d.f.s can be used to derive these one-step predictions.

Lemma: Let the p.d.f. of the random vector x be a weighted sum of N multivariate Gaussian p.d.f.s as follows:

(A.3)px=∑j=1NαjNx;xj,Pj

where αj , xj, and Pj are, respectively, the jth weight, mean, and covariance. (i) The mean value of x is given by

(A.4)xa=∑j=1Nαjxj.

(ii) The error covariance is given by

(A.5)Pa=∑j=1NαjPj+xa–xjxa–xjH.

Proof: The first part of the lemma is easily proved from the definition of xa, i.e.,

(A.6)xa=Ex=∫xx∑j=1NαjNx;xj,Pjdx=∑j=1Nαjxj.

To prove the second part of the lemma, consider

(A.7)Pa=Ex−xax−xaH=∑j=1Nαj∫xxxHNjdx+xaxaH−∫xxxaHNjdx−∫xxaxHNjdx

where, for convenience, we have used the notation Nj to represent the p.d.f. N(x; xj, Pĵ). Observe that ∫xxaHNjdx=xjxaHand∫xaxHNjdx=xaxjH. By adding and subtracting the term xjxjH to the expression inside the square brackets, we obtain

(A.8)Pa=∑j=1Nαj∫xxxHNjdx−xjxjH+xaxaH−xjxaH−xaxjH+xjxjH=∑j=1Nαj∫xxxHNjdx−xjxjH+xa−xjxa−xjH..

It is straightforward to show that

(A.9)∑j=1Nαj∫xxxHNjdx−xjxjH=∑j=1Nαj∫xx−xj(x−xj)HNjdx=∑j=1NαjPj.

Substituting this result into Eq. (A.8 ), we obtain the result in Eq. (A.5 ) for the error covariance Pa, which completes the proof.

To obtain the one-step predicted mean vector of the blind MAPSD algorithm, Eq. (A.4 ) is used with xj=Ff^jk|k. The summation is performed over the M predecessor subsequences djk,Nf, yielding

(A.10)f^ik+1|k)=∑j:djk,Nf∈dik+1,NfαjFf^jk|k.

By substituting αj=pdjk,Nf|rk−1/qik, the final expression in Eq. (53) is obtained. Similarly, the one-step error covariance update in Eq. (54) is obtained by substituting FPjk|kFH+Q=Pj and

(A.11)νj,ikνj,iHk=xa−xjxa−xjH

into Eq. (A.5 ) where xa=f^ik+1|k (and xj=Ff^jk|k as above).

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/S0090526796800454

State Estimation

Jay Farrell, in The Electrical Engineering Handbook, 2005

4.3 Recursive State Estimation

Equations 4.4 through 4.5 provide the mean and the covariance of the state through time based only on the initial mean state vector and its error covariance matrix. When measurements y(k) are available, the measurements can be used to improve the accuracy of an estimate of the state vector at time k. The symbols xˆ−(k) and xˆ+(k) are used to denote the estimate of x(k) before and after incorporating the measurement, respectively.

Similarly, the symbols Pxˆ−(k) and Pxˆ+(k) are used to denote the error covariance matrices corresponding to xˆ−(k) and xˆ+(k), respectively. This section presents the time propagation and measurement update equations for both the state estimate and its error covariance. The equations are presented in a form that is valid for any linear unbiased measurement correction. These equations contain a gain matrix K that determines the estimator performance. The choice of K to minimize the error covariance Pxˆ+(k) will be of interest.

For an unbiased linear measurement, the update will have the form:

(4.8)xˆ+(k)=xˆ−(k)+K(k)(y(k)−yˆ−(k)),

where yˆ−(k)=H(k)xˆ−(k).. The error covariance of xˆ+(k) is the following:

(4.9)Pxˆ+(k)=(I−K(k)H(k))Pxˆ−(k)(I−K(k)H(k))T +K(k)R(k)KT(k).

K(k) is a possibly time-varying state estimation gain vector to be designed. If no measurement is available at time k, then K(k) = 0, which yields xˆ+(k)=xˆ−(k) and Pxˆ+(k)=Pxˆ−(k). If a measurement is available, and the state estimator is designed well, then Pxˆ+(k)≤Pxˆ−(k). In either case, the time propagation of the state estimate and its error covariance matrix is achieved by:

(4.10)xˆ−(k+1)=Φ(k)xˆ+(k)+Γˆuu(k)

(4.11)Pxˆ−(k+1)=Φ(k)Pxˆ+(k)ΦT(k)+ΓωQd(k)ΓωT.

At least two issues are of interest relative to the state estimation problem. First, does there exist a state estimation gain vector K(k) such that xˆ is guaranteed to converge to x regardless of initial condition and the sequence u(k)? Second, how should the designer select the gain vector K(k)?

The first issue raises the question of observability. A linear time-invariant system is observable if the following matrix has rank n:

[HT, ΦTHT, …,( ΦT)nHT].

When a system is observable, then it is guaranteed that a stabilizing gain vector K exists. Assuming that the system of interest is observable, the remainder of this chapter discusses the design and analysis of state estimators.

Figure 4.1 portrays the state estimator in conjunction with the system of interest. The system of interest is depicted in the upper left. The state estimator is superimposed on a gray background in the lower right.

FIGURE 4.1. State Estimation System Block Diagram

This interconnected system will be referred to as the state estimation system. The figure motivates several important comments. First, although the state estimator has only n states, the state estimation system has 2n states. Second, the inputs to the state estimation system are the deterministic input u and the stochastic inputs ω and v. Third, the inputs to the state estimator are the deterministic input u and the measured plant output y. The state space model for the state estimation system is the following:

(4.12)[x(k+1)xˆ−(k+1)]=[Φx0LHxΦ−LH][x(k)xˆ−(k)]+[ΓuΓω0Γˆu0L][u(k)ω(k)v(k)],

where L = ΦK.

Based on this state-space model, with the assumption that the system is time invariant, the transfer function from v to yˆ is as written here:

(4.13)Gv(z)=H[zI−(Φ−LH)]−1L,

where z is the discrete-time unit advance operator. Assuming that H = Hx, the transfer function from u to r is as follows:

(4.14)Gu(z)=H[zI−(Φ−LH)]−1 [(zI−Φ)(zI−Φx)−1Γu−(zI−Φx)(zI−Φx)−1Γˆu].

Therefore, if Γu=Γˆu and Φx = Φ, then this transfer function is identically zero. Assuming again that H = Hx, the transfer function from ω to r is the following:

(4.15)Gω(z)=H[zI−(Φ−LH)]−1[zI−Φ][zI−Φx]−1Γω.

In the special case where, in addition, Φx = Φ, the transfer function Gω(z) has n identical poles and zeros. This transfer functions is often stated as:

(4.16)Gω(z)=H[zI−(Φ−LH)]−1Γω,

where n pole and zero cancellations have occurred. These pole-zero cancellations and therefore the validity of equation 4.16 are dependent on the exact modeling assumption and the stability of the canceled poles.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780121709600500815

Data Assimilation in Numerical Weather Prediction and Sample Applications

Andrew S. Jones, Steven J. Fletcher, in Solar Energy Forecasting and Resource Assessment, 2013

13.3.5 Ensemble DA

The ensemble Kalman filter (EnsKF) is a sequential filter that forecasts the state vector, xf, as well as the model-error covariance matrix, Pf, toward a future time step (Evensen, 1994). This is a linear process, but it can employ nonlinear models within the system; no adjoints are required. For example, the forward state is given by the propagation of the model forward in time

(13.29)xf(ti)=Mi−1[xa(ti−1)]

as well as its associated forecast-error covariance matrix

(13.30)Pf(ti)=Mi−1Pa(ti−1)Mi−1T+Q(ti−1)

This is followed by an analysis step that updates (or readjusts) the state information and the forecast-error covariance information

(13.31)xa(ti)=xf(ti)+Kidi

(13.32)Pa(ti)=(I−KiHi)Pf(ti)

where the innovation vector, di, is given by

(13.33)di=yio−Hi[xf(ti)]

It is important to note that M and H are linearizations of the gradients of M and H with respect to the control vector, x. The Kalman gain, Ki, is given by

(13.34)Ki=Pf(ti)HiT[HiPf(ti)HiT+Ri]−1

where Pf(ti) is now approximated by the mean ensemble estimate

(13.35)Pf(ti)≈1K−2∑K≠lK[xf(tk)−x¯f(tl)][xf(tk)−x¯f(tl)]T

K is the number of ensemble-model runs required to generate the estimate, and a reference model state, l, is used to define the mean ensemble estimate of the forecast error covariance matrix. In addition to the approach in equation 13.35, other EnsKF variants are used by the NWP DA community. The analysis stage that propagates the forecast error covariance matrix is a powerful feature of EnsKF. Additional improvements in EnsKF performance can be achieved by improving sampling behaviors—for example, using sampling strategies and square root schemes, some of which also allow for a low-rank representation of the observational-error covariance matrix (Evensen, 2004).

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780123971777000139

The Basics of Analytical Mechanics, Optimization, Control and Estimation

Kyle T. Alfriend, … Louis S. Breger, in Spacecraft Formation Flying, 2010

3.8 Kalman Filtering

In 1960, Robert Kalman introduced a new approach for minimum mean-square error filtering that used state-space methods [80]. The Kalman Filter (KF) is a recursive scheme that propagates a current estimate of a state and the error covariance matrix of that state forward in time. The filter optimally blends the new information introduced by the measurements with old information embodied in the prior state with a Kalman gain matrix. The gain matrix balances uncertainty in the measurements with the uncertainty in the dynamics model. The KF is guaranteed to be the optimal filter (in the sense of minimizing the 2-norm-squared of the estimation error) for a linear system with linear measurements [81]. However, few systems can be accurately modeled with linear dynamics. Shortly after its inception, improvements on the Kalman filter to handle nonlinear systems were proposed. One of the most popular choices, the Extended Kalman Filter (EKF), was applied to relative navigation filters in LEO [43]. We will demonstrate how to use the EKF for relative spacecraft state estimation in Chapter 12.

The discrete EKF is as a state estimator for systems whose state dynamics model, measurement model, or both may be nonlinear, as in Eqs. (3.48) and (3.53) [81]. The dynamics model provides the equations to propagate xˆk, the estimate of the state x at time k, to time step k+1, producing xˆk+1. The measurement model then incorporates the new sensor information to update this estimate, updating the a priori estimate xˆk+1− to the a posteriori estimate, xˆk+1+. This process is illustrated in Fig. 3.1.

FIGURE 3.1. A Kalman filter process.

The continuous state x is governed by the dynamics

(3.48)ẋ(tk)=f(x,u,tk)+w(tk)

where u is a known control input, and w(t) is an additive white noise that models the error accumulated by uncertainty in the dynamics during the time step. The power spectral density of this zero mean, white noise process is

(3.49)Q=E[w(t)w(t)T]

To proceed, linear expressions for the dynamics and measurement equations must be formed. In general, this requires knowledge of the probability density function [81], but the EKF approximates the nonlinear function by expanding it in a Taylor series, at each time step, about the current estimate,

(3.50)Fk=∂f∂x|x=xˆk

The dynamics are discretized with time step Δt by forming the state transition matrix,

(3.51)Φk=eFkΔt

The cumulative effect of the white noise process w(t) over the time step is captured in the discrete process noise covariance matrix

(3.52)Qk=∫0ΔteFkτQ(eFkτ)Tdτ

The vector of measurements, y,

(3.53)y=h(x,t)+vk

is modeled as a nonlinear function of the state and time, with an additive white noise process v(t) that accounts for uncertainty in the sensors and their models. The measurement noise covariance matrix is defined by

(3.54)Rk=E[vkvkT]

The nonlinear measurement equation is also linearized about the current estimate,

(3.55)Hk=∂h∂x|x=xˆk−

Because approximations must be made in the linearization, the EKF is a suboptimal filter, in the sense that its stability and performance are not guaranteed. Fortunately, the dynamics of orbital motion are fairly simple, and the EKF can have very good performance in space navigation applications. The discrete, linear representation of the system dynamics are

(3.56)xk=Φk−1xk−1+wk−1+uk−1

The confidence in the current estimate is captured in the state error covariance matrix, P,

(3.57)Pk=E[x̃kx̃kT]=E[(xˆk−xk)(xˆk−xk)T]

where x̃k=xˆk−xk is the estimation error. The first step in the EKF involves propagating the state and error covariance forward in time. Equation (3.56), with zero process noise, is used to propagate the state estimate. The error covariance is propagated forward using

(3.58)Pk−=Φk−1Pk−1+Φk−1T+Qk−1

An alternate approach to the time propagation step involves using the nonlinear dynamics equations to propagate the state. A 4th-order Runge–Kutta integration scheme uses the nonlinear state dynamics equation

(3.59)xˆ̇(t)=f(xˆ(t),u(t))for t=tk−1→tk

to find xˆk. The state covariance is still propagated with Eq. (3.58), so the state transition matrix Φk−1 must be calculated regardless of whether the linear or nonlinear state propagation is chosen.

The second step of the filter uses the measurement equation to update the a priori state xˆk− to the a posteriori state xˆk+. When a measurement becomes available, the new information provided by the measurement and the previous information captured in the state estimate are combined to form an updated state estimate. The Kalman gain K is the blending gain matrix that is used to weight the importance of the old and new information. The optimum gain matrix is formulated by minimizing the trace of the a posteriori state error covariance matrix Pk+, which essentially minimizes the estimation error vector at each time step [81]. The terms in the gain matrix equation include the previous state estimate, the linearized measurement matrix, and the expected noise of the new measurements,

(3.60)Kk=Pk−HkT(HkPk−HkT+Rk)−1

The nonlinear measurement equation is used to update the state estimate

(3.61)xˆk+=xˆk−−Kk(yk−hk(xˆk−))

Note that the computation of the gain matrix Kk requires the linear measurement matrix Hk. The covariance is updated after the measurement with

(3.62)Pk+=(I−KkHk)Pk−(I−KkHk)T+KkRkKkT

which is the Joseph form of the covariance update whose inherent symmetry makes it numerically stable [82].

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780750685337002086

Static State Estimation

Soliman Abdel-hady Soliman Ph.D., Ahmad M. Al-Kandari Ph.D., in Electrical Load Forecasting, 2010

2.3 Properties of Least Error Squares Estimation

Least error squares estimation results are easy to compute and possess a number of interesting properties. The least squares are the best estimates (maximum likelihood) when the measurement errors follow a Gaussian or normal distribution and the weighting matrix is equal to the inverse of the error covariance matrix. The least error squares estimates can be easily calculated.

Where the measurement error distribution does not follow a Gaussian distribution and the number of measurements greatly exceeds the number of unknown parameters, the method of least error squares yields very good estimates. However, there are many estimation problems for which the error distribution is not a Gaussian and the number of measurements does not greatly exceed the number of unknown parameters. In such cases, the least error squares estimations are adversely affected by bad data. This problem has been recognized and addressed by several researchers who have proposed different ways of refining the least error squares method to make estimation less affected by the presence of bad data. In the next section, we discuss an alternative technique to the LES estimation. This technique is based on least absolute value approximation.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780123815439000026

Principles of meteorology and numerical weather prediction

Sue Ellen Haupt, … Branko Kosović, in Renewable Energy Forecasting, 2017

1.2.2.2 Variational assimilation

Variational assimilation techniques are a form of statistical interpolation. All statistical interpolation techniques require an estimation of the error covariances between variables in the background state, as well as error covariances between the observed variables. These techniques find the optimal analysis by globally minimizing a cost function that incorporates the distance between the analysis and observations within the assimilation window. This method also requires the observation error covariance matrix and the background error covariance matrix (e.g., Talagrand, 1997; Kalnay, 2003), which at times may be difficult to compute accurately. In three-dimensional variational DA (3D-Var) schemes, these error covariance matrices come from a static climatology, and all observations within a given assimilation window are assumed to be valid at the analysis time. These assumptions reduce the computational burden. In contrast, four-dimensional variational DA (4D-Var) schemes seek to minimize the cost function, subject to the NWP model equations, to find the best model trajectory through the entire assimilation window, rather than just at the analysis time. In addition, the error covariance matrices are flow-dependent in 4D-Var. These differences make 4D-Var significantly more computationally intensive than 3D-Var, but also more accurate (e.g., Klinker et al., 2000; Yang et al., 2009).

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780081005040000019

Smart grids: control and cybersecurity

Magdi S. Mahmoud, … Mutaz M. Hamdan, in Cyberphysical Infrastructures in Power Systems, 2022

2.2.3 Cyberattack minimization in smart grids

A recursive KF estimator (RKFE) is constructed to operate on observation information in order to produce the optimal state estimation. The forecasted system-state estimate is expressed as follows:

(2.8)xˆr(k)=Adxˆ(k−1)+Bdu(k−1),

where xˆ(k−1) is the previous state estimate. Then the forecasted error covariance matrix is given by

(2.9)Pr(k)=AdP(k−1)AdT+Qw(k−1),

where P(k−1) is the previously estimated error covariance matrix. The observation innovation residual d(k) is given by

(2.10)d(k)=yrd(k)−Cxˆr(k),

where yrd(k) is the dequantized and demodulated output bit sequence. The Kalman gain matrix can be written as

(2.11)K(k)=Pr(k)CT[CPr(k)CT+Rv(k)]−1.

This yields the updated state estimation as

(2.12)xˆ(k)=xˆr(k)−K(k)d(k),

along with the updated estimated error-covariance matrix

(2.13)P(k)=Pr(k)−K(k)CPr(k).

After estimating the system state, the proposed control strategy is applied for regulating the MG states as shown in the next section.

Read full chapter

URL:

https://www.sciencedirect.com/science/article/pii/B9780323852616000117

$begingroup$

How is the var/cov error matrix calculated by statistical analysis packages in practice?

This idea is clear to me in theory. But not in practice. I mean, if I have a vector of random variables $textbf{X}=(X_{1}, X_{2}, ldots, X_{n})^top$, I understand that the variance/covariance matrix $Sigma$ will be given the external product of the deviance-from-the-mean vectors: $Sigma=mathrm{E}left[(textbf{X}-mathrm{E}(textbf{X}))(textbf{X}-mathrm{E}(textbf{X}))^topright]$.

But when I have a sample, the errors of my observations are not random variables. Or better, they are, but only if I take a number of identical samples from the same population. Otherwise, they’re given. So, again my question is: how can a statistical package produce a var/cov matrix starting from a list of observations (i.e. a sample) supplied by the researcher?

asked Jun 24, 2013 at 14:01

$endgroup$

2

$begingroup$

The covariance matrix for a model of the type $y = Xbeta + epsilon$ is usually computed as $$(X^t X)^{-1}frac{sigma^2}{d}$$ where $sigma^2$ is the residual sum of squares, $sigma^2=sum_i (y_i — X_ihatbeta)^2$ and $d$ is the degrees of freedom (typically the number of observations minus the number of parameters).

For robust and or clustered standard errors, the product $X^t X$ is modified slightly. There may also be other ways to calculate the covariance matrix, e.g. as suggested by the expectation of outer products.

answered Jul 24, 2013 at 16:40

Simen GaureSimen Gaure

7145 silver badges7 bronze badges

$endgroup$

1

$begingroup$

- OLS estimation of the error variance, $sigma^2$:

$$s^2=frac{hat varepsilon^tophat varepsilon}{n-p}$$

This is included in Practical Regression and Anova using R

by Julian J. Faraway, page 21

.

Example of its calculation in R, based on linear model of miles-per-gallon regressed on multiple car model specs included in the mtcars database: ols = lm(mpg ~ disp + drat + wt, mtcars). These are the manual calculations and the output of the lm() function:

> rdf = nrow(X) - ncol(X) # Residual degrees of freedom

> s.sq = as.vector((t(ols$residuals) %*% ols$residuals) / rdf)

> # s square (OLS estimate of sigma square)

> (sigma = sqrt(s.sq)) # Residual standar error

[1] 2.950507

> summary(ols)

Call:

lm(formula = mpg ~ disp + drat + wt, data = mtcars)

...

Residual standard error: 2.951 on 28 degrees of freedom

- Variance — Covariance matrix of the estimated coefficients, $hat beta$:

$$mathrm{Var}left[hat beta mid X right] =sigma^2 left(X^top Xright)^{-1}$$

estimated as in page 8 of this online document as

$$hat{mathrm{Var}}left[hat beta mid X right] =s^2 left(X^top Xright)^{-1}$$

> X = model.matrix(ols) # Model matrix X

> XtX = t(X) %*% X # X transpose X

> Sigma = solve(XtX) * s.sq # Variance - covariance matrix

> all.equal(Sigma, vcov(ols)) # Same as built-in formula

[1] TRUE

> sqrt(diag(Sigma)) # Calculated Std. Errors of coef's

(Intercept) disp drat wt

7.099791769 0.009578313 1.455050731 1.217156605

> summary(ols)[[4]][,2] # Output of lm() function

(Intercept) disp drat wt

7.099791769 0.009578313 1.455050731 1.217156605

answered Jul 25, 2018 at 3:56

Antoni ParelladaAntoni Parellada

25.2k16 gold badges110 silver badges212 bronze badges

$endgroup$

$begingroup$

With linear regression we are fitting a model $Y = beta*X +varepsilon$. $Y$ is the dependent variable, the $X$’s are the predictor (explanatory) variables. We use the data provided to us (the training set or the sample) to estimate the population $beta$’s. The $X$’s are not considered random variables. The $Y$’s are random because of the error component.

answered Jun 24, 2013 at 15:05

$endgroup$

4

Least Squares Estimation

-

Ordinary Least Squares

-

Covariance-Weighted Least Squares

-

Error Covariance Estimation

-

Feasible Generalized Least Squares

-

Panel Corrected Standard Errors

Ordinary Least Squares

When you fit multivariate linear regression models using mvregress, you can use the optional name-value pair 'algorithm','cwls' to choose least squares estimation. In this case, by default, mvregress returns ordinary least squares (OLS) estimates using Σ=Id. Alternatively, if you specify a covariance matrix for weighting, you can return covariance-weighted least squares (CWLS) estimates. If you combine OLS and CWLS, you can get feasible generalized least squares (FGLS) estimates.

The OLS estimate for the coefficient vector is the vector b that minimizes

Let y denote the nd-by-1 vector of stacked d-dimensional responses, and X denote the nd-by-K matrix of stacked design matrices. The K-by-1 vector of OLS regression coefficient estimates is

This is the first mvregress output.

Given Σ=Id (the mvregress OLS default), the variance-covariance matrix of the OLS estimates is

This is the fourth mvregress output. The standard errors of the OLS regression coefficients are the square root of the diagonal of this variance-covariance matrix.

If your data is not scaled such that Σ=σ2Id, then you can multiply the mvregress variance-covariance matrix by the mean squared error (MSE), an unbiased estimate of σ2. To compute the MSE, return the n-by-d matrix of residuals, E (the third mvregress output). Then,

where ei=(yi−Xiβ)′ is the ith row of E.

Covariance-Weighted Least Squares

For most multivariate problems, an identity error covariance matrix is insufficient, and leads to inefficient or biased standard error estimates. You can specify a matrix for CWLS estimation using the optional name-value pair argument covar0, for example, an invertible d-by-d matrix named C0. Usually, C0 is a diagonal matrix such that the inverse matrix C0−1 contains weights for each dimension to model heteroscedasticity. However, C0 can also be a nondiagonal matrix that models correlation.

Given C0, the CWLS solution is the vector b that minimizes

In this case, the K-by-1 vector of CWLS regression coefficient estimates is

This is the first mvregress output.

If Σ=C0, this is the generalized least squares (GLS) solution. The corresponding variance-covariance matrix of the CWLS estimates is

This is the fourth mvregress output. The standard errors of the CWLS regression coefficients are the square root of the diagonal of this variance-covariance matrix.

If you only know the error covariance matrix up to a proportion, that is, Σ=σ2C0, you can multiply the mvregress variance-covariance matrix by the MSE, as described in Ordinary Least Squares.

Error Covariance Estimation

Regardless of which least squares method you use, the estimate for the error variance-covariance matrix is

where E is the n-by-d matrix of residuals. The ith row of E is ei=(yi−Xib)′.

The error covariance estimate, Σ^, is the second mvregress output, and the matrix of residuals, E, is the third output. If you specify the optional name-value pair 'covtype','diagonal', then mvregress returns Σ^ with zeros in the off-diagonal entries,

Feasible Generalized Least Squares

The generalized least squares estimate is the CWLS estimate with a known covariance matrix. That is, given Σ is known, the GLS solution is

with variance-covariance matrix

In most cases, the error covariance is unknown. The feasible generalized least squares (FGLS) estimate uses Σ^ in place of Σ. You can obtain two-step FGLS estimates as follows:

-

Perform OLS regression, and return an estimate Σ^.

-

Perform CWLS regression, using C0=Σ^.

You can also iterate between these two steps until convergence is reached.

For some data, the OLS estimate Σ^ is positive semidefinite, and has no unique inverse. In this case, you cannot get the FGLS estimate using mvregress. As an alternative, you can use lscov, which uses a generalized inverse to return weighted least squares solutions for positive semidefinite covariance matrices.

Panel Corrected Standard Errors

An alternative to FGLS is to use OLS coefficient estimates (which are consistent) and make a standard error correction to improve efficiency. One such standard error adjustment—which does not require inversion of the covariance matrix—is panel corrected standard errors (PCSE) [1]. The panel corrected variance-covariance matrix for OLS estimates is

The PCSE are the square root of the diagonal of this variance-covariance matrix. Fixed Effects Panel Model with Concurrent Correlation illustrates PCSE computation.

Maximum Likelihood Estimation

-

Maximum Likelihood Estimates

-

Standard Errors

Maximum Likelihood Estimates

The default estimation algorithm used by mvregress is maximum likelihood estimation (MLE). The loglikelihood function for the multivariate linear regression model is

The MLEs for β and Σ are the values that maximize the loglikelihood objective function.

mvregress finds the MLEs using an iterative two-stage algorithm. At iteration m + 1, the estimates are

and

The algorithm terminates when the changes in the coefficient estimates and loglikelihood objective function are less than a specified tolerance, or when the specified maximum number of iterations is reached. The optional name-value pair arguments for changing these convergence criteria are tolbeta, tolobj, and maxiter, respectively.

Standard Errors

The variance-covariance matrix of the MLEs is an optional mvregress output. By default, mvregress returns the variance-covariance matrix for only the regression coefficients, but you can also get the variance-covariance matrix of Σ^ using the optional name-value pair 'vartype','full'. In this case, mvregress returns the variance-covariance matrix for all K regression coefficients, and d or d(d + 1)/2 covariance terms (depending on whether the error covariance is diagonal or full).

By default, the variance-covariance matrix is the inverse of the observed Fisher information matrix (the 'hessian' option). You can request the expected Fisher information matrix using the optional name-value pair 'vartype','fisher'. Provided there is no missing response data, the observed and expected Fisher information matrices are the same. If response data is missing, the observed Fisher information accounts for the added uncertainty due to the missing values, whereas the expected Fisher information matrix does not.

The variance-covariance matrix for the regression coefficient MLEs is

evaluated at the MLE of the error covariance matrix. This is the fourth mvregress output. The standard errors of the MLEs are the square root of the diagonal of this variance-covariance matrix.

For Σ^, let θ denote the vector of parameters in the estimated error variance-covariance matrix. For example, if d = 2, then:

-

If the estimated covariance matrix is diagonal, then θ=(σ^12,σ^22).

-

If the estimated covariance matrix is full, then θ=(σ^12,σ^12,σ^22).

The Fisher information matrix for θ, I(θ), has elements

where nθ is the length of θ (either d or d(d + 1)/2). The resulting variance-covariance matrix is

When you request the full variance-covariance matrix, mvregress returns (as the fourth output) the block diagonal matrix

Missing Response Data

-

Expectation/Conditional Maximization

-

Observed Information Matrix

Expectation/Conditional Maximization

If any response values are missing, indicated by NaN, mvregress uses an expectation/conditional maximization (ECM) algorithm for estimation (if enough data is available). In this case, the algorithm is iterative for both least squares and maximum likelihood estimation. During each iteration, mvregress imputes missing response values using their conditional expectation.

Consider organizing the data so that the joint distribution of the missing and observed responses, denoted y˜ and y respectively, can be written as

Using properties of the multivariate normal distribution, the conditional expectation of the missing responses given the observed responses is

Also, the variance-covariance matrix of the conditional distribution is

At each iteration of the ECM algorithm, mvregress uses the parameter values from the previous iteration to:

-

Update the regression coefficients using the combined vector of observed responses and conditional expectations of missing responses.

-

Update the variance-covariance matrix, adjusting for missing responses using the variance-covariance matrix of the conditional distribution.

Finally, the residuals that mvregress returns for missing responses are the difference between the conditional expectation and the fitted value, both evaluated at the final parameter estimates.

If you prefer to ignore any observations that have missing response values, use the name-value pair 'algorithm','mvn'. Note that mvregress always ignores observations that have missing predictor values.

Observed Information Matrix

By default, mvregress uses the observed Fisher information matrix (the 'hessian' option) to compute the variance-covariance matrix of the regression parameters. This accounts for the additional uncertainty due to missing response values.

The observed information matrix includes contributions from only the observed responses. That is, the observed Fisher information matrix for the parameters in the error variance-covariance matrix has elements

where Σ^i is the subset of Σ^ corresponding to the observed responses in yi.

For example, if d = 3, but yi2 is missing, then

The observed Fisher information for the regression coefficients has similar contributions from the design and covariance matrices.

References

[1] Beck, N. and J. N. Katz. «What to Do (and Not to Do) with Time-Series-Cross-Section Data in Comparative Politics.» American Political Science Review, Vol. 89, No. 3, pp. 634–647, 1995.

See Also

mvregress | mvregresslike

Related Examples

- Set Up Multivariate Regression Problems

- Multivariate General Linear Model

- Fixed Effects Panel Model with Concurrent Correlation

- Longitudinal Analysis

More About

- Multivariate Linear Regression

Open access peer-reviewed chapter

Submitted: August 14th, 2017 Reviewed: October 26th, 2017 Published: December 20th, 2017

DOI: 10.5772/intechopen.71960

From the Edited Volume

Kalman Filters

Edited by Ginalber Luiz de Oliveira Serra

Abstract

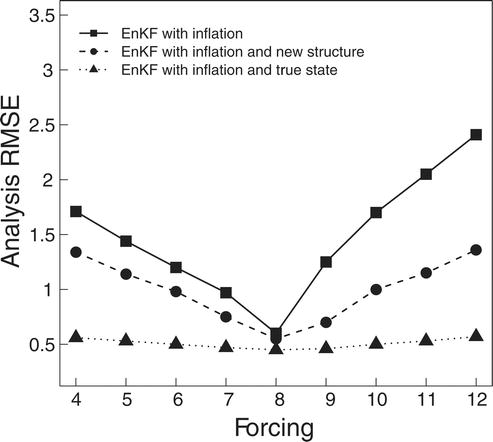

The estimation accuracy of ensemble forecast errors is crucial to the assimilation results for all ensemble-based schemes. The ensemble Kalman filter (EnKF) is a widely used scheme in land surface data assimilation, without using the adjoint of a dynamical model. In EnKF, the forecast error covariance matrix is estimated as the sampling covariance matrix of the ensemble forecast states. However, past researches on EnKF have found that it can generally lead to an underestimate of the forecast error covariance matrix, due to the limited ensemble size, as well as the poor initial perturbations and model error. This can eventually result in filter divergence. Therefore, using inflation to further adjust the forecast error covariance matrix becomes increasingly important. In this chapter, a new structure of the forecast error covariance matrix is proposed to mitigate the problems with limited ensemble size and model error. An adaptive procedure equipped with a second-order least squares method is applied to estimate the inflation factors of forecast and observational error covariance matrices. The proposed method is tested on the well-known atmosphere-like Lorenz-96 model with spatially correlated observational systems. The experiment results show that the new structure of the forecast error covariance matrix and the adaptive estimation procedure lead to improvement of the analysis states.

Keywords

- data assimilation

- ensemble Kalman filter

- error covariance inflation

- observation-minus-forecast residual

- least squares

-

Guocan Wu*

- College of Global Change and Earth System Science, Beijing Normal University, China

- Joint Center for Global Change Studies, China

-

Xiaogu Zheng

- Key Laboratory of Regional Climate-Environment Research for East Asia, Institute of Atmospheric Physics, Chinese Academy of Sciences, China

*Address all correspondence to: gcwu@bnu.edu.cn

1. Introduction

For state variables in geophysical research fields, a common assumption is that systems have “true” underlying states. Data assimilation is a powerful mechanism for estimating the true trajectory based on the effective combination of a dynamic forecast system (such as a numerical model) and observations [1]. It can produce an optimal combination of model outputs and observations [2]. The combined result is called analysis state, which should be closer to the true state than either the model forecast or the observation is. In fact, the analysis state can generally be treated as the weighted average of the model forecasts and observations, while the weights are approximately proportional to the inverse of the corresponding covariance matrices [3]. Therefore, the results of any data assimilation depend crucially on the estimation accuracy of the forecast and observational error covariance matrices [4]. If these matrices are estimated correctly, then the analysis states can be generated by minimizing an objective function which is technically straightforward and can be accomplished using existing engineering solutions [5], although finding the appropriate analysis state is still a quite difficult problem when the models are nonlinear [6, 7].

The ensemble Kalman filter (EnKF) is a widely used sequential data assimilation approach, which has been studied and applied since it is proposed by Evensen [8]. It is a practical ensemble-based assimilation scheme that estimates the forecast error covariance matrix using a Monte Carlo method with the short-term ensemble forecast states [9]. In EnKF, the forecast error covariance matrix is estimated as the sampling covariance matrix of the ensemble forecast states, which is usually underestimated due to the limited ensemble size and model error [10]. This finding indicates that the filter is over reliant on the model forecasts and excludes the observations. It may eventually lead to the divergence of the EnKF assimilation scheme [11, 12].

Therefore, the forecast error covariance inflation technique to address this problem becomes increasingly important. One of the error covariance matrix inflation techniques is additive inflation, in which a noise is added to the ensemble forecast states that sample the probability distribution of model error [13, 14]. Another widely used error covariance matrix inflation technique is multiplicative inflation, that is, to multiply the matrix by an appropriate factor. It can be used to mitigate filter divergence by inflating the empirical covariance and increasing the robustness of the filter [15].

In early studies of multiplicative inflation, researchers determine the inflation factor by repeated experimentation and choose a value according to their prior knowledge [11]. Hence, such experimental tuning is rather empirical and subjective. It is not appropriate to use the same inflation factor during all the assimilation procedure. Too small or too large an inflation factor will cause the analysis state to over rely on the model forecasts or observations and can seriously undermine the accuracy and stability of the filter. In later studies, the inflation factor is estimated online based on the observation-minus-forecast residual (innovation statistic) [16, 17] with different conditions.

Past work shows that moment estimation can facilitate the calculation by solving an equation of the observation-minus-forecast residual and its realization [18–20]. Maximum likelihood approach can obtain a better estimate of the inflation factor than moment approach, although it must calculate a high-dimensional matrix determinant [21–24]. Bayesian approach assumes a prior distribution for the inflation factor but is limited by spatially independent observational errors [25, 26]. Second-order least square estimation focus on minimizing the second-order least squares (SLS) [27] statistic of the squared observation-minus-forecast residual, which is not very expensive [28–30]. Generalized Cross Validation (GCV) [31, 32] can select a regularization parameter by minimizing the predictive data errors with rotation invariant in a least squares solution [33].

In practice, the observational error covariance matrix may also need to be adjusted, and an approach can be used to simultaneously optimize inflation factors of both forecast and observational error covariance matrices [21]. This approach is based on the optimization of the likelihood function of observation-minus-forecast residual. However, the likelihood function of observation-minus-forecast residual is nonlinear and involves the computationally expensive determinant and inverse of the residual covariance matrix. As compensation, the second-order least squares statistic of the squared observation-minus-forecast residual can be used as the cost function instead. The main advantage of the SLS cost function is that it is a quadratic function of the inflation factors, and therefore, the analytic forms of the estimators of the inflation factors can be easily obtained. Compared with the method based on maximum likelihood estimation method, the computational cost is significantly reduced.

Furthermore, unlike the sampling covariance matrix of the ensemble forecast states used in the conventional EnKF, a new structure of the forecast error covariance matrix is proposed in this chapter. In ideal situation, an ensemble forecast state is assumed as a random vector with the true state as its ensemble mean. Hence, it is should be defined that the ensemble forecast error is the ensemble forecast states minus true state rather than minus their ensemble mean [34]. This is because in a forecast model with large error and limited ensemble size, the ensemble mean of the forecast states can be very far from the true state. Therefore, the sampling covariance matrix of the ensemble forecast states can be very different from the true forecast error covariance matrix. As a result, the estimated analysis state can be substantially inaccurate. However, in reality, the true state is unknown, but the analysis state is a better estimate of the true state than the forecast state. Therefore, the information feedback from the analysis state can be used to revise the forecast error covariance matrix. In fact, the proposed forecast error covariance matrix is a combination of multiplicative and additive inflation. Bai and Li [14] also used the feedback from the analysis state to improve assimilation but in a different way.

This chapter consists of four sections. The EnKF scheme with a new structure of the forecast error covariance matrix and the adaptive estimation procedure is proposed in Section 2. The assimilation results on Lorenz model with a correlated observational system are presented in Section 3. Conclusions and discussion are given in Section 4.

Advertisement

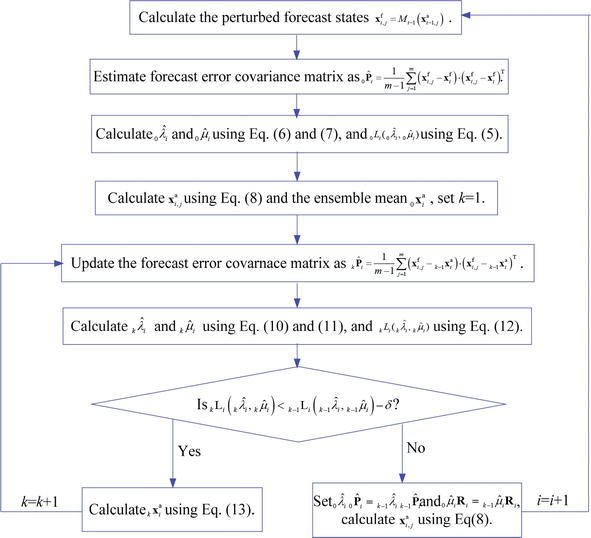

2. Methodology